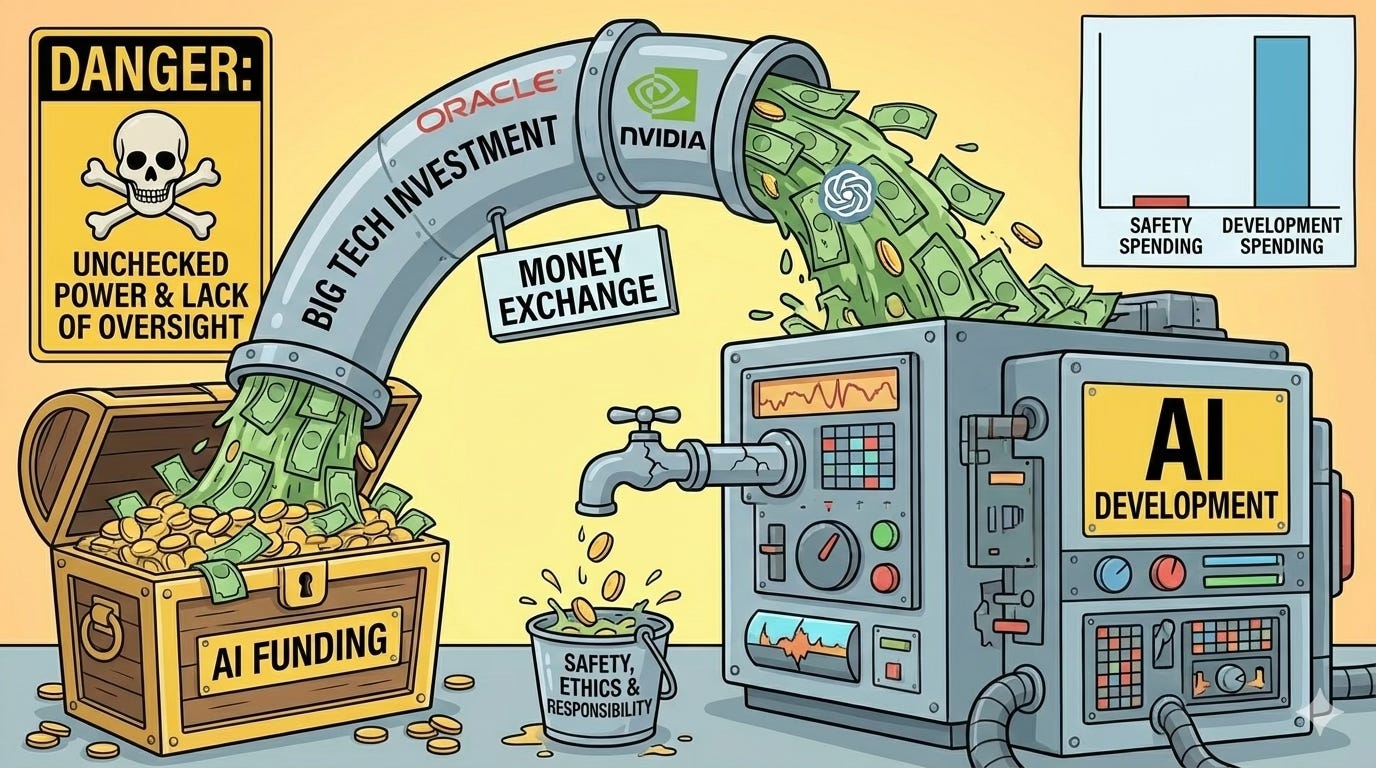

When $300 Billion Buys Everything Except Safety

Oracle, OpenAI, and the art of spending astronomical sums while avoiding the one thing that matters.

In September 2025, Oracle and OpenAI announced a deal worth $300 billion, one of the largest technology contracts in history. The agreement requires 4.5 gigawatts of electricity (enough to power 4 million U.S. homes) and will deploy over 2 million AI chips.

$300 billion is more than New Zealand’s GDP. Instead of, say, solving global hunger, it’s buying data centers so OpenAI can generate better chatbot responses. Meanwhile, OpenAI burns $8 billion annually and projects $44 billion in losses through 2028.

So, how much of that $300 billion ensures these systems are safe, responsible, and ethical?

Spoiler: approximately none.

The Numbers Don’t Lie

Let’s establish a baseline. Public sector investment in AI safety research totals around $10 million globally. Meanwhile, companies spend over $100 billion annually building more powerful AI systems.

That’s a ratio of 1:10,000. For every dollar spent ensuring AI is safe, ten thousand dollars go toward making it more powerful.
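The ratio follows directly from the two figures just cited. A minimal Python sketch of the arithmetic, taking the article's round numbers at face value (the variable names are illustrative, not from any source):

```python
# Figures as cited in the text (round numbers, US dollars).
public_safety_spend = 10_000_000        # ~$10 million, public-sector AI safety research
capability_spend = 100_000_000_000      # ~$100 billion per year, corporate AI build-out

# Dollars spent on capability for every dollar spent on safety.
capability_per_safety_dollar = capability_spend // public_safety_spend

print(f"1:{capability_per_safety_dollar:,}")
```

The result is only as good as the two inputs; a larger safety baseline would shrink the ratio proportionally.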

Open Philanthropy’s AI safety funding, one of the largest sources of safety research grants, averages about $13 million annually. The Frontier Model Forum’s AI Safety Fund supports independent research with grants measured in millions, not billions.

Meanwhile, the Oracle-OpenAI deal alone works out to $60 billion per year, which implies a term of about five years for the $300 billion total. A single year of that spending would fund current global AI safety research, at the roughly $10 million level cited above, for 6,000 years. But instead of funding safety research, OpenAI committed separately to spend $60 billion annually on compute and $10 billion developing custom AI chips.
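The 6,000-year figure can be checked the same way. This sketch assumes the baseline for "current global AI safety research" is the roughly $10 million cited earlier; the variable names are illustrative:

```python
# Figures as cited in the text (US dollars).
deal_total = 300_000_000_000       # total contract value
deal_per_year = 60_000_000_000     # annual value of the deal
safety_per_year = 10_000_000       # assumed baseline: ~$10 million per year

# Contract length implied by the total and the annual figure.
implied_term_years = deal_total / deal_per_year

# Years of safety research that one year of the deal could fund.
years_funded = deal_per_year / safety_per_year

print(f"Implied term: {implied_term_years:.0f} years")
print(f"One year of the deal funds {years_funded:,.0f} years of safety research")
```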

The priorities are clear: make AI bigger, faster, more powerful. Figure out if it’s dangerous later. Maybe. If there’s time.

Stargate: Infrastructure Without Guardrails

The Oracle-OpenAI partnership is part of “Stargate,” OpenAI’s $500 billion AI infrastructure initiative announced at the White House in January 2025. The project includes five new data center sites, bringing total capacity to nearly 7 gigawatts.

The promotional language is impressively grandiose: “create new jobs, accelerate America’s reindustrialization, advance U.S. AI leadership.”

What’s missing? Any mention of safety frameworks, ethical guidelines, or accountability mechanisms. The historic opportunity apparently doesn’t include historically ensuring the technology won’t cause historic problems.

Oracle’s Gamble: Debt-Fueled AI Expansion

Oracle carries over $100 billion in debt as of Q2 FY2026. The company revealed that its 2026 capital expenditures would be $15 billion higher than forecast, bringing the total to $50 billion. When Oracle’s stock plunged, the company insisted everything remains on track.

For this to work, Oracle must rent compute resources to OpenAI at a price that exceeds its own cost of financing hardware and meeting lease obligations. This assumes OpenAI can afford $60 billion annually, even though it generates $10 billion in revenue while burning $8 billion per year.

The math, as they say, is not mathing.
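To make the mismatch concrete, here is a rough sketch using only the figures cited above. It ignores every other cost and any future revenue growth, which is a simplifying assumption, and the variable names are illustrative:

```python
# Figures as cited in the text (US dollars per year).
annual_obligation = 60_000_000_000   # what OpenAI would owe Oracle each year
annual_revenue = 10_000_000_000      # OpenAI's current revenue

# How many times current revenue the obligation represents.
obligation_to_revenue = annual_obligation / annual_revenue

# Extra revenue needed just to cover the Oracle payments,
# before any other cost (simplifying assumption).
revenue_gap = annual_obligation - annual_revenue

print(f"Obligation is {obligation_to_revenue:.0f}x current revenue")
print(f"Gap to cover: ${revenue_gap / 1e9:.0f} billion per year")
```

Since the company is already reported to burn $8 billion a year, the real gap would be wider than this sketch suggests.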

The Safety Spending Gap

While Oracle and OpenAI chase gigawatts and exaflops, AI safety spending remains a rounding error.

Biden’s FY 2025 budget proposal included hundreds of millions for AI efforts, including funding for the U.S. AI Safety Institute and support for the National AI Research Resource pilot. These are meaningful initiatives, but they’re measured in the hundreds of millions, whereas compute infrastructure is measured in the hundreds of billions.

IBM research found that while 78% of organizations maintain robust documentation and 74% conduct ethical impact assessments, actual implementation often falls short. Having a safety policy and implementing safety practices are apparently very different.

The Future of Life Institute’s 2025 AI Safety Index assessed major AI companies and concluded: “Some companies are making token efforts, but none are doing enough. We are spending hundreds of billions of dollars to create superintelligent AI systems over which we will inevitably lose control.”

The Dangers of the Money Flow

The Oracle-OpenAI-Nvidia money exchange creates specific risks beyond underinvestment in safety:

Incentive misalignment: OpenAI must generate sufficient revenue to pay Oracle $60 billion annually. This creates enormous pressure to deploy AI systems quickly, at scale, regardless of their readiness. Safety becomes an impediment to survival.

Concentration of power: Nvidia supplies the chips, Oracle provides the infrastructure, and OpenAI builds the models. Three companies control the entire stack for frontier AI, with no regulatory oversight and minimal transparency. When the same entities control hardware, infrastructure, and deployment, who’s checking if safety commitments are actually implemented?

Debt-driven decisions: Oracle’s $100+ billion debt load means the company must make this work. That’s a recipe for “deploy now, fix problems later, hope nothing catastrophic happens.”

Regulatory capture: When you’re committing $300 billion, you can afford significant lobbying. Note that Stargate was announced at the White House with presidential endorsement. Meanwhile, Trump’s executive order eliminated state AI regulations while proposing no federal replacements. Convenient for companies needing regulatory freedom to justify massive investments.

The “too big to fail” problem: Once you’ve invested $300 billion in AI infrastructure, admitting there are fundamental safety problems becomes existential. It becomes easier to minimize concerns, downplay risks, and keep deploying, because turning back would mean acknowledging that the investment was premature.

What Responsible Investment Would Look Like

If this were about safe AI rather than winning an arms race, we would:

Allocate at least 5% of AI budgets to safety, ethics, and governance.

Fund safety research independently from the companies building systems.

Require pre-deployment testing, with results reviewed by AI Safety Institutes.

Mandate the transparent reporting of safety-to-capability spending ratios.

Account for the environmental impact of 4.5 gigawatts.

But none of this happens because none of it helps win the race to deploy first.

The Uncomfortable Parallel

This feels familiar: massive capital investment, promises of revolution, risks dismissed as fear-mongering, regulatory frameworks dismantled, financial structures assuming continued growth.

We’ve seen this before: the dot-com bubble, the housing crisis, crypto implosions. The difference? When previous bubbles popped, they wiped out wealth but didn’t create existential risks. If AI systems deployed at massive scale worldwide prove to have fundamental problems, the consequences will not be merely financial.

What This Means Going Forward

AI safety incidents surged 56.4% in 2024, with 233 documented failures. That’s with limited deployment.

The Oracle-OpenAI deal shows the future: massive capital invested in capability development, minimal investment in safety, and decisions driven by financial necessity rather than ethics.

When OpenAI needs $60 billion annually to pay Oracle, every safety concern becomes a business threat. Every delay for testing costs money the company doesn’t have.

That means they’re rushing the most powerful technology humanity has created, hoping nothing goes catastrophically wrong before they recoup their investment.

Ethicore Advisors Author’s Note

At Ethicore Advisors, I work with organizations asking how much to invest in AI safety versus capabilities.

The Oracle-OpenAI deal provides an answer: invest everything in capabilities, almost nothing in safety, and hope the math works out before the debt comes due.

This isn’t responsible innovation. It’s a bet that we can build superintelligent AI faster than it can cause problems, financed with debt that requires profitable deployment regardless of whether safety research indicates we’re ready.

If $300 billion funds infrastructure but provides no dedicated safety resources, we’re not building the future. We’re financing a very expensive disaster.